GitLab Backup And Restore Best Practices [Step-by-step TUTORIAL]

It is hard to find a developer or IT professional who has never heard of GitLab. It’s estimated that over 30 million users build their code in GitLab. But how do you ship that code securely and avoid data loss?

In this detailed step-by-step tutorial, we go through GitLab backup best practices to give developers, ops teams, IT leaders, and security and compliance professionals the best tips to protect source code, eliminate data loss, ensure development and business continuity, and meet Shared Responsibility and security compliance requirements.

Introduction to GitLab backup

What is GitLab backup?

In short, backup is the process of creating a structured, automated copy (or multiple copies) of your GitLab data, both repositories and metadata, to use if the original data is lost, destroyed, encrypted, or unavailable. GitLab backup is crucial for preventing data loss and ensuring business continuity: regular backups protect GitLab data and make disaster recovery possible. You can also create backup archives and keep GitLab data for historical purposes (for example, to satisfy a data retention policy) or for future reference.

You can store your GitLab backups in cloud storage or on your local server, whichever suits your organization’s needs and requirements. The main incentive for backing up is the possibility of restoring GitLab data in case of an unexpected event.

Understanding GitLab data

GitLab data includes repositories, issues, merge requests, and other metadata, all of which need to be backed up regularly. GitLab’s built-in backup task packages this data into a single tar archive that can later be used for restoration. Restoring from that archive erases or moves existing data, so prepare the target directories and permissions beforehand and run the restore through the dedicated backup task. Larger GitLab instances can spread repositories across multiple storage shards managed by Gitaly; for those, alternative backup strategies are needed to scale.

Does GitLab have backups?

GitLab is a comprehensive DevOps solution, and of course, it has its own way of backing up and restoring GitLab instances. Backups are performed via GitLab Rake tasks, which assist with common operational and administrative processes (e.g. development, integrity checks, importing large project exports, and backup). GitLab provides a command-line interface that backs up your entire instance into an archive file containing the GitLab database, all your Git repositories, and attachments. Keep in mind, though, that you can restore data only to the same GitLab version and type (CE/EE) on which the backup was originally created.

Also, note that the Rake task doesn’t store your configuration files, TLS keys, certificates, or system files. This is deliberate: your database contains encrypted data, such as 2FA secrets and CI/CD secure variables, and storing that encrypted data in the same place as the configuration file and the key that decrypts it would defeat the purpose of encryption.

To use the Rake tasks for backup, Rsync must be installed on your system. Whether you need to install it yourself depends on how you installed GitLab: the Omnibus (Linux) package already includes it, while installations from source may require you to add it manually.
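To make this concrete, here is a minimal Python sketch that assembles the backup command for the two installation methods mentioned above and checks for Rsync first. It assumes GitLab 12.2 or later, where Omnibus installations ship the `gitlab-backup` wrapper; the function and constant names are hypothetical, and the sketch only builds the command rather than running it.

```python
import shutil
from typing import List, Optional


def backup_command(install_method: str, skip: Optional[List[str]] = None) -> List[str]:
    """Build the argv for GitLab's built-in backup (GitLab 12.2 or later assumed)."""
    if install_method == "omnibus":
        # Omnibus (Linux) package: the gitlab-backup wrapper around the Rake task.
        cmd = ["sudo", "gitlab-backup", "create"]
    elif install_method == "source":
        # Installation from source: call the Rake task as the git user.
        cmd = ["sudo", "-u", "git", "-H", "bundle", "exec", "rake",
               "gitlab:backup:create", "RAILS_ENV=production"]
    else:
        raise ValueError(f"unsupported install method: {install_method!r}")
    if skip:
        # SKIP excludes components from the archive, e.g. "uploads" or "builds".
        cmd.append("SKIP=" + ",".join(skip))
    return cmd


# Not included in the backup archive: copy these separately and store them
# apart from the archive (paths for Omnibus installations).
FILES_TO_COPY_SEPARATELY = ["/etc/gitlab/gitlab-secrets.json", "/etc/gitlab/gitlab.rb"]


def check_rsync() -> None:
    """Fail early if rsync, which the backup Rake task relies on, is missing."""
    if shutil.which("rsync") is None:
        raise RuntimeError("rsync not found; install it before running a backup")


print(" ".join(backup_command("omnibus", skip=["uploads", "builds"])))
# sudo gitlab-backup create SKIP=uploads,builds
```

On the GitLab server, you could pass the resulting list to `subprocess.run` after `check_rsync()` succeeds. The separately copied configuration files belong in a different location from the archive, for the encryption reason explained above.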

But this solution has some limitations that GitLab itself highlights. We have already mentioned the first one – the need to restore the data to the same GitLab version and type.

The second is that GitLab doesn’t back up items that are not stored on the file system. If you use object storage, GitLab recommends enabling backups with your object storage provider or using third-party backup software for GitLab.

Finally, GitLab recommends alternative backup strategies if:

- your GitLab instance contains a lot of repository data,

- it has many forked projects, and the regular backup task duplicates the Git data for each of them,

- your GitLab instance has a problem that makes the regular backup and import Rake tasks unusable.

How to use GitLab backup?

Understanding the importance of GitLab backups helps you choose the right backup strategy, whether that means Rake tasks, backup archives, or backup utility scripts. Many developers treat the git clone command as a backup and build their own GitLab backup scripts on top of it, yet a clone is not a backup: it copies the Git data but not the metadata GitLab stores outside the repository, such as issues and merge requests.

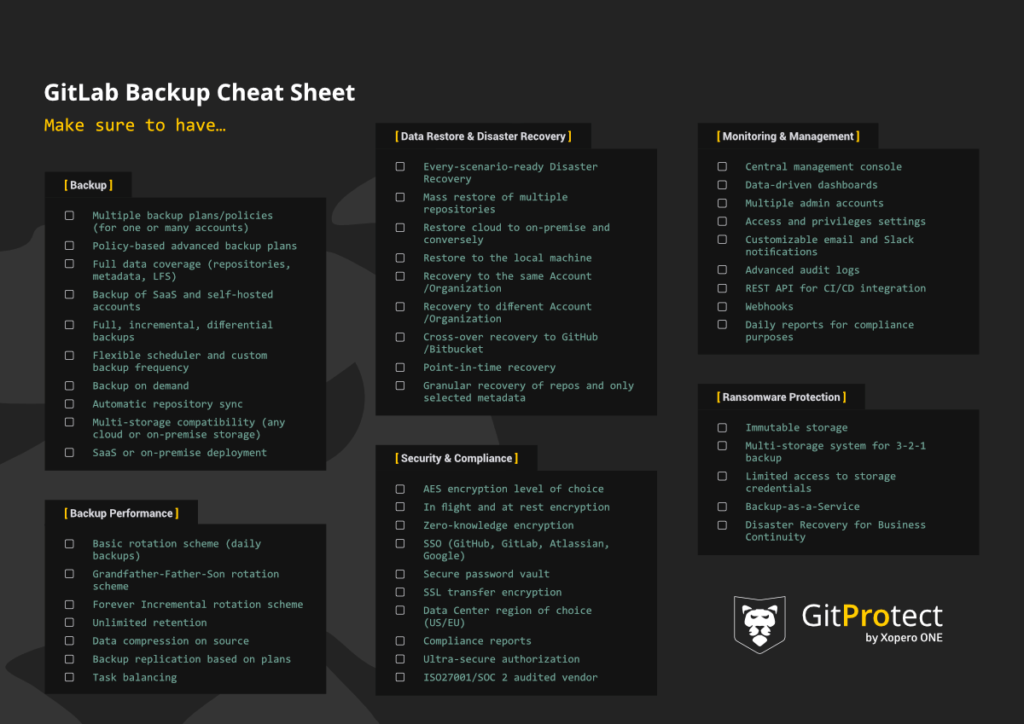

Manual scripts and Rake tasks can be useful, yet they distract your DevOps team from their core duties. They also have limitations and don’t give your organization a Disaster Recovery guarantee. In our definition of GitLab backup above, we assumed that a backup should be structured and automated to guarantee data accessibility and recoverability in the event of failure. So let’s consider the most professional and recommended approach: specialized, automated third-party backup and Disaster Recovery software for GitLab. Below are the features it should provide and the best practices for GitLab backup and Disaster Recovery.

Backup strategies

If you want to be sure that your entire GitLab environment is well protected, you need to back up all repositories together with their related GitLab metadata. Remember that Git itself is not a backup and can’t be treated as a default backup strategy. So, whether you use GitLab or GitLab Ultimate, your GitLab backups should include:

- Repository,

- Wiki,

- Issues,

- Issue comments,

- Deployment keys,

- Merge requests,

- Merge request comments,

- Webhooks,

- Labels,

- Milestones,

- Pipelines,

- Tags,

- LFS,

- Releases,

- Collaborators,

- Commits,

- Branches,

- Variables,

- Groups,

- Snippets,

- Project’s topics.
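To see why Git alone does not cover this checklist, here is a simplified, illustrative Python sketch. It splits the items into those that a plain `git clone --mirror` of the project repository brings along and those stored elsewhere (GitLab’s database, the separate wiki repository, LFS storage). The classification is a deliberate simplification, and it uses GitLab’s own terms, such as merge requests, for the items.

```python
# Illustrative backup checklist, in GitLab terminology.
CHECKLIST = [
    "Repository", "Wiki", "Issues", "Issue comments", "Deployment keys",
    "Merge requests", "Merge request comments", "Webhooks", "Labels",
    "Milestones", "Pipelines", "Tags", "LFS", "Releases", "Collaborators",
    "Commits", "Branches", "Variables", "Groups", "Snippets", "Project topics",
]

# Objects that travel inside the project's own Git repository.
IN_MIRROR_CLONE = {"Repository", "Commits", "Branches", "Tags"}


def not_covered_by_clone(items):
    """Return checklist items that a mirror clone alone would miss, in order."""
    return [item for item in items if item not in IN_MIRROR_CLONE]


missing = not_covered_by_clone(CHECKLIST)
print(f"{len(missing)} of {len(CHECKLIST)} items need more than git clone")
# 17 of 21 items need more than git clone
```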

To tailor your Git repository data protection policy to the needs, structure, and workflow of your organization, your backup solution should let you create multiple backup plans.

What is the best way to achieve this? Set up a backup plan that backs up critical repositories and metadata at least daily to track changes, and more frequently if possible. You can use the Grandfather-Father-Son (GFS) rotation scheme, or another backup plan, to keep unused repos for future reference. Incremental backups, which copy only what changed since the previous backup, are also an efficient strategy: they save storage space, speed up the backup process, and reduce how often you need full backups.
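The GFS idea can be sketched in a few lines of Python. This is an illustrative policy, not any product’s implementation: it assumes one backup per day and keeps the newest 7 daily backups (sons), the newest backup of each of the last 4 ISO weeks (fathers), and the newest backup of each of the last 12 months (grandfathers).

```python
from datetime import date, timedelta


def gfs_keep(backups, daily=7, weekly=4, monthly=12):
    """Return the sorted backup dates retained by a simple GFS rotation."""
    ordered = sorted(set(backups), reverse=True)  # newest first
    keep = set(ordered[:daily])                   # sons: latest daily backups
    weeks, months = {}, {}
    for d in ordered:
        # The first date seen for a week or month is its newest backup.
        weeks.setdefault(d.isocalendar()[:2], d)  # key: (ISO year, ISO week)
        months.setdefault((d.year, d.month), d)
    keep.update(list(weeks.values())[:weekly])    # fathers: weekly backups
    keep.update(list(months.values())[:monthly])  # grandfathers: monthly backups
    return sorted(keep)


# Sixty daily backups from 2024-01-01 to 2024-02-29.
backups = [date(2024, 1, 1) + timedelta(days=i) for i in range(60)]
kept = gfs_keep(backups)
print(len(kept), kept[0], kept[-1])  # 10 2024-01-31 2024-02-29
```

Everything not in `kept` is a candidate for deletion; the window sizes are assumptions you would tune to your own retention policy.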

If needed, you can keep your backups indefinitely. You can even delete unused data from your GitLab account and keep a copy in backup storage, so it no longer takes up space in GitLab.

Save your storage space with incremental and differential backup

If you want to save storage space, speed up backups, and limit bandwidth, your backup tool should copy only the blocks of GitLab data that changed since the last copy. Ideally, you should also be able to define separate retention and performance schemes for each backup type: full, incremental, or differential. It also helps when the tool can compress backups into archives to save storage space.
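The difference between incremental and differential backups comes down to what each one is compared against. The Python sketch below illustrates this at file level (real tools may track changed blocks instead) using SHA-256 content fingerprints. The file names and contents are made up for illustration, and deletions are ignored for brevity.

```python
import hashlib


def fingerprint(files):
    """Map each path to the SHA-256 digest of its content."""
    return {path: hashlib.sha256(data).hexdigest() for path, data in files.items()}


def changed_since(reference, current):
    """Paths that are new or modified compared with a reference fingerprint."""
    return sorted(p for p, digest in current.items() if reference.get(p) != digest)


# Day 0 is a full backup; the repository then changes on days 1 and 2.
day0 = fingerprint({"README.md": b"v1", "src/app.py": b"v1"})
day1 = fingerprint({"README.md": b"v2", "src/app.py": b"v1"})
day2 = fingerprint({"README.md": b"v2", "src/app.py": b"v2", "docs/guide.md": b"v1"})

# Incremental: compare with the previous backup, whatever its type.
incremental_day2 = changed_since(day1, day2)   # ['docs/guide.md', 'src/app.py']
# Differential: always compare with the last full backup.
differential_day2 = changed_since(day0, day2)  # ['README.md', 'docs/guide.md', 'src/app.py']
```

The trade-off shows up at restore time. Incremental backups are smaller, but a restore needs the full backup plus every incremental since then. A differential restore needs only the full backup and the latest differential.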

Choose the best deployment that suits you: SaaS or On-Premise

Whether you use GitLab or GitLab Ultimate, your backup tool has to run somewhere. So, here comes the question: should it run in the cloud (SaaS) or be self-hosted in your private infrastructure? The main difference is where the backup and restore service is installed and run.

Suppose you choose the SaaS model. In that case, you don’t need to allocate any additional devices as a local server, because the service runs within the provider’s cloud infrastructure. You don’t have to worry about maintenance, administration, or continuity of operation, because the service provider takes care of all of that.

If you prefer an on-premise deployment, you need to install the software on a machine that you provision and control, because it runs locally in your environment. Ideally, you should be able to install it on any computer, whether it runs Windows, Linux, or macOS, or even on popular NAS devices. With this deployment model, your copies travel over the local network, so you depend less on internet connectivity, which can make the backup process faster and more efficient.

At the same time, make sure the deployment model you choose doesn’t limit which data storage you can use.

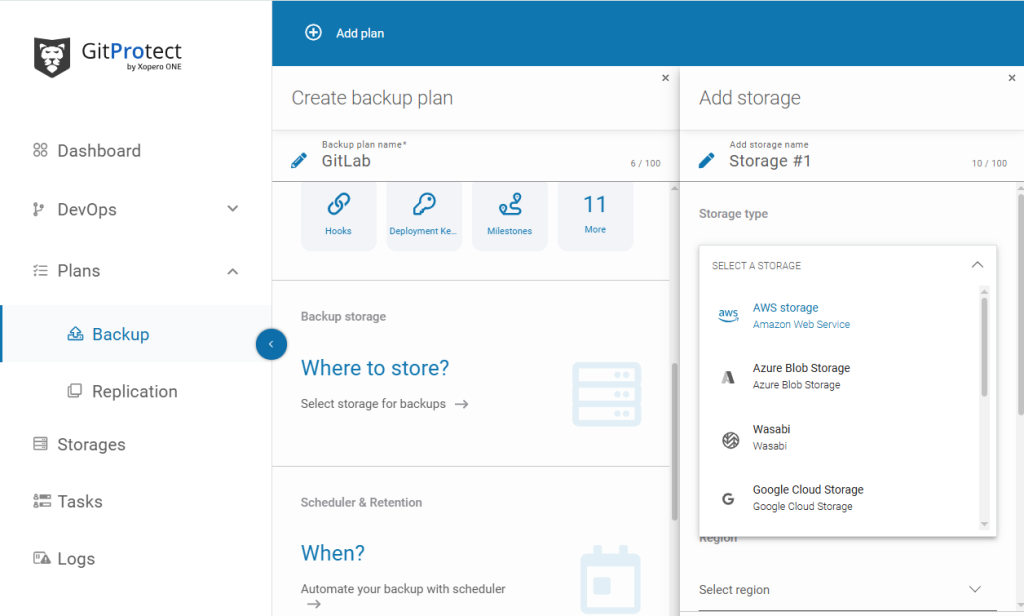

If you choose GitProtect.io Cloud PRO, Cloud Enterprise, or On-Premise Enterprise, you can use GitProtect unlimited cloud storage, because it is always included in the license. With the Enterprise plan, you can also bring your own storage, either cloud or on-premise. GitProtect.io supports storage such as AWS S3, Wasabi Cloud, Backblaze B2, Google Cloud Storage, and Azure Blob Storage. Moreover, it is compatible with S3-compatible storage and with on-premise storage, including NFS, CIFS, and SMB network shares, local disk resources, and hybrid or multi-cloud environments.

Add multiple storage instances and complete the 3-2-1 backup rule

You should be able to add an unlimited number of storage instances for your GitLab backups, whether cloud or on-premise. Ideally, you should use both and replicate your GitLab backups between storage destinations. Why? Because it reduces the risk of an outage or disaster to a minimum and helps you apply the 3-2-1 backup rule, according to which you should have at least 3 copies, kept on 2 different storage instances, with at least 1 in the cloud.
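The rule as stated here can be expressed as a simple check. In this hypothetical Python sketch, the `Copy` type and the storage names are illustrative, and the production data itself counts as one of the three copies (a common reading of the rule).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    name: str
    storage: str    # storage instance holding this copy, e.g. "local-nas"
    in_cloud: bool


def meets_3_2_1(copies):
    """At least 3 copies, on at least 2 storage instances, at least 1 in the cloud."""
    return (
        len(copies) >= 3
        and len({c.storage for c in copies}) >= 2
        and any(c.in_cloud for c in copies)
    )


copies = [
    Copy("production GitLab", "gitlab-server", False),
    Copy("nightly backup", "local-nas", False),
    Copy("replicated backup", "aws-s3", True),
]
print(meets_3_2_1(copies))      # True
print(meets_3_2_1(copies[:2]))  # False: only 2 copies, none in the cloud
```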

With GitProtect.io, which is a multi-storage system, you can store your git repository data:

- in the cloud (GitProtect Cloud, AWS S3, Wasabi Cloud, Backblaze B2, Google Cloud Storage, Azure Blob Storage, and any other public cloud compatible with S3),

- locally (NFS, CIFS, SMB network shares, local disk resources),

- in a hybrid environment/multi-cloud.

Whichever license you choose, GitProtect Unlimited Cloud Storage is included for free, so you can start protecting your existing GitLab data from the moment you sign in.

Let’s look at an example of how this multi-storage system works:

Imagine you are an ordinary developer in a company where the Security and Compliance department requires all employees to store their data on Google Cloud Storage. But… you decided to have your own backup plan as well and started sending copies to your local server. One day, a major Google outage occurs, and you need to restore repository backups from three weeks ago immediately. In this situation, all you need to do is log in to your GitProtect.io account and restore the needed data.

You can restore it to the same or a new GitLab account, to your local machine, or cross-over to another Git hosting platform, such as GitHub, Bitbucket, or Azure DevOps. You will be able to continue your work in about 5 minutes.

Backup replication matters

Another important feature to consider when choosing a backup tool is backup replication. It lets you keep backup copies in multiple locations in line with the 3-2-1 backup rule, which provides redundancy and supports business continuity. Ideally, you should be able to replicate between any data stores: cloud to cloud, cloud to local, or local to local, without limitations.

If you back up your data with GitProtect.io, replication becomes much easier. You can set up a replication plan directly from the management console: provide the source and target storage, the agent, and the schedule, and your backup replication plan is ready.
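A replication plan comes down to the four inputs listed above. The following Python sketch models them as a hypothetical data structure (it is not GitProtect.io’s API). It rejects two obvious mistakes: replicating a storage onto itself and a malformed schedule, which is assumed here to be a five-field cron expression.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReplicationPlan:
    """Hypothetical model of a backup replication plan."""
    source_storage: str
    target_storage: str
    agent: str
    schedule: str  # assumed five-field cron expression, e.g. "0 3 * * *"

    def __post_init__(self):
        # Replicating a storage onto itself adds no redundancy.
        if self.source_storage == self.target_storage:
            raise ValueError("source and target storage must differ")
        if len(self.schedule.split()) != 5:
            raise ValueError("schedule must have five cron fields")


# Replicate local NAS backups to an S3 bucket every day at 03:00.
plan = ReplicationPlan("local-nas", "aws-s3", "agent-01", "0 3 * * *")
```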

Acquire flexible retention – up to unlimited

Repository providers usually offer 30 to 365 days of retention by default; GitLab, for example, lets its customers keep data for 90 days. But what if you need data from 4 or 5 years ago? That is why retention settings are one of the most important factors when choosing a backup solution.

You need to make sure that its features meet your legal, compliance, and industry requirements. Some organizations must keep all their data for years, or even indefinitely. The right retention policy depends on three factors: responsibilities (what data your repositories store), time (how long it must be kept), and restoration (how far back you must be able to restore after a failure).

You should be able to set different retention rules for each backup plan by:

- indicating the number of copies you want to keep,

- indicating how long each copy is kept in storage (these parameters should be set separately for full, differential, and incremental backups),

- disabling rules and keeping copies indefinitely.
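These three options map naturally onto a per-type retention rule. Here is a minimal Python sketch, with hypothetical `Backup` and `Rule` types, that returns the copies a plan would delete. It deliberately ignores backup chains; a real tool must not delete a full backup that later incrementals or differentials still depend on.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Backup:
    kind: str          # "full", "differential" or "incremental"
    created: datetime


@dataclass(frozen=True)
class Rule:
    max_copies: Optional[int] = None     # None: no limit on the number of copies
    max_age: Optional[timedelta] = None  # None: no limit on age


def to_delete(backups: List[Backup], rules: Dict[str, Rule], now: datetime) -> List[Backup]:
    """Return backups that break their type's rule; types without a rule are kept forever."""
    doomed = []
    for kind, rule in rules.items():
        same_kind = sorted((b for b in backups if b.kind == kind),
                           key=lambda b: b.created, reverse=True)  # newest first
        for index, backup in enumerate(same_kind):
            too_many = rule.max_copies is not None and index >= rule.max_copies
            too_old = rule.max_age is not None and now - backup.created > rule.max_age
            if too_many or too_old:
                doomed.append(backup)
    return doomed


now = datetime(2024, 6, 30)
backups = [Backup("full", now - timedelta(days=d)) for d in (0, 30, 60, 90)]
backups += [Backup("incremental", now - timedelta(days=d)) for d in range(1, 15)]
rules = {"full": Rule(max_copies=3), "incremental": Rule(max_age=timedelta(days=7))}
removed = to_delete(backups, rules, now)
print(len(removed))  # 8: the oldest full backup and 7 incrementals older than 7 days
```

A `Rule()` with both limits left as `None` corresponds to disabling rules and keeping copies indefinitely.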

Monitoring center – email and Slack notifications, tasks, advanced audit logs

Even if you aren’t directly responsible for managing the backup solution, you may still need to monitor backup performance, check task statuses, and see who is responsible for specific changes to the settings in order to keep your admins accountable. That calls for a comprehensive, customizable monitoring center.

One of the easiest ways to stay informed without logging in is to customize your email notification settings. You should be able to configure:

- recipients (thus, only interested parties will be notified about backup statuses),

- backup plan summary details, including tasks finished with success, with warnings, canceled tasks, not started tasks, and failed tasks,

- a preferred language, which might be an advantage for some teams.

Ideally, notifications should also reach the software your team uses every day. With Slack notifications, for example, it becomes much less likely that you will miss any vital information.
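As an illustration of how such a notification could be produced, here is a Python sketch that builds the plan summary described above and posts it through a Slack incoming webhook, which accepts a JSON body with a `text` field. The status names and function names are assumptions. The webhook URL would come from your Slack workspace and is not shown here.

```python
import json
import urllib.request
from collections import Counter

# Assumed status names, matching the summary categories listed above.
STATUSES = ("success", "warning", "canceled", "not started", "failed")


def plan_summary(plan_name, task_statuses):
    """Summarize task outcomes for one backup plan, in a fixed status order."""
    counts = Counter(task_statuses)
    parts = [f"{counts[s]} {s}" for s in STATUSES if counts[s]]
    return f"Backup plan '{plan_name}': " + (", ".join(parts) or "no tasks")


def post_to_slack(webhook_url, text):
    """Send `text` to a Slack incoming webhook and return the HTTP status code."""
    body = json.dumps({"text": text}).encode("utf-8")
    request = urllib.request.Request(
        webhook_url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


summary = plan_summary("nightly", ["success", "success", "failed", "warning"])
print(summary)  # Backup plan 'nightly': 2 success, 1 warning, 1 failed
```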

When you need to check the status of ongoing tasks and historical events, you can turn to the tasks section. It will provide you with all the necessary information about actions in progress.

Your backup tool should also provide advanced audit logs. These logs record how the applications and services work, which backups were created, and which data was restored. You can also see which actions each admin performs, which helps you detect and deter intentional malicious activity.

For easier, hands-off monitoring, it helps if you can forward those audit logs to your external monitoring systems and remote management software via webhooks and API.

All of the above should be accessible through a single central management console that lets you manage backup tasks, restores, monitoring, and all system settings. Visual statistics, a data-driven dashboard, and real-time actions will save you time.

GitProtect.io is the only GitLab backup and recovery software on the market that can provide you with an all-in-one solution managed with a single central management console.

Create a dedicated GitLab user account only for backup reasons to bypass throttling

For big enterprise users, the best idea is to create a dedicated GitLab account that is connected to the backup tool and used only for backup purposes, for example, one registered to a dedicated backup address on your company domain. There are two reasons for this. The first is security, as this user should have access only to the repositories you want to protect. The second is that it helps bypass throttling: API rate limits apply per user, so every application that uses the same account shares one pool of requests. A separate backup user gets its own pool, which lets backup tasks run without delays or queues.

If you manage a big organization with many repositories, it helps to have several GitLab users dedicated to backup. Then, if the first one exhausts its API request limit, the next one is attached automatically, and so on. This way, even an enormous GitLab environment can be backed up without interruption.
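Here is a minimal Python sketch of that rotation, assuming each token belongs to a different dedicated backup user with its own per-user API budget. It switches tokens when GitLab answers with HTTP 429 (Too Many Requests) or when the `RateLimit-Remaining` response header reaches zero. The class and token names are hypothetical, and a production client should also honor the `Retry-After` header once every token is exhausted.

```python
class TokenPool:
    """Rotate between access tokens of several dedicated backup users."""

    def __init__(self, tokens):
        if not tokens:
            raise ValueError("at least one token is required")
        self._tokens = list(tokens)
        self._index = 0

    @property
    def current(self):
        """Token to send with the next request (e.g. in the PRIVATE-TOKEN header)."""
        return self._tokens[self._index]

    def on_response(self, status_code, remaining=None):
        """Advance to the next token if this user's request budget is used up."""
        exhausted = status_code == 429 or (remaining is not None and remaining <= 0)
        if exhausted:
            self._index = (self._index + 1) % len(self._tokens)
        return self.current


pool = TokenPool(["token-backup-1", "token-backup-2"])
pool.on_response(200, remaining=120)  # budget left: keep "token-backup-1"
pool.on_response(429)                 # throttled: switch to "token-backup-2"
print(pool.current)                   # token-backup-2
```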

Backup security

GitLab backup software for SOC 2, ISO 27001 compliance

Security is a top concern today, and for any IT-related organization, the most sensitive data to protect is source code. That is why your repository and metadata backup should include a number of security features that ensure data accessibility and recoverability, improve your security posture, and help you meet your shared responsibility duties. In short, it should empower your team and keep you on top of regulatory standards at the same time.

So, when you choose a software provider and a Data Center to host your service, make sure they apply world-class security measures and hold the relevant audits and certificates.

Here are security issues you should keep in mind and pay attention to:

- AES encryption and your own encryption key,

- encryption: in-flight and at rest,

- flexible, long-term, unlimited retention,

- the possibility to archive old, unused repositories in accordance with legal requirements,

- easy monitoring center,

- multi-tenancy, the possibility to add additional admins and assign privileges,

- Data Center strict security measures,

- ransomware protection,

- Disaster Recovery technologies.

Use AES encryption in-flight and at rest

It is impossible to talk about security and data protection without proper, sustainable encryption. Backup encryption is essential for protecting GitLab data, and it is important to encrypt your data at every stage: before and during transmission (in-flight) and in storage (at rest). That way, even if your data is intercepted, it cannot be read without the encryption key.

Your software should also offer AES (Advanced Encryption Standard) encryption. AES is a symmetric-key algorithm, which means the same key is used to encrypt and decrypt data. No practical attack against full AES is publicly known, which is why many governments and organizations rely on it.

Ideally, you should be able to choose the encryption strength and level, for example:

- Low, which runs the AES algorithm in OFB (Output Feedback) mode with a 128-bit encryption key.

- Medium, which runs the AES algorithm in OFB mode with a longer, 256-bit key.

- High, which runs the AES algorithm in CBC (Cipher Block Chaining) mode with a 256-bit key.

You should always have this choice because the encryption method you select affects backup time and the load on the end device, and it may limit some functionalities. However, there is no need to worry about security: no practical attack against properly implemented AES is publicly known at any of these levels.

When you configure the encryption level, you should be asked for a string of characters from which your encryption key is derived. You should be the only person who knows this string, and it is a good idea to store it in a password manager.
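Deriving a key from a passphrase is typically done with a password-based key derivation function. The Python sketch below uses PBKDF2-HMAC-SHA256 from the standard library to turn a passphrase into a 128-bit or 256-bit AES key. The choice of PBKDF2, the iteration count, and the sample passphrase are illustrative assumptions, since the article does not say which derivation function any product uses.

```python
import hashlib
import os


def derive_key(passphrase: str, salt: bytes, bits: int = 256,
               iterations: int = 600_000) -> bytes:
    """Derive an AES key of `bits` length from a passphrase (PBKDF2-HMAC-SHA256)."""
    if bits not in (128, 256):
        raise ValueError("expected a 128-bit or 256-bit AES key")
    return hashlib.pbkdf2_hmac("sha256", passphrase.encode("utf-8"), salt,
                               iterations, dklen=bits // 8)


salt = os.urandom(16)  # random per backup set; stored alongside it, not secret
key_128 = derive_key("correct horse battery staple", salt, bits=128)  # "Low" level
key_256 = derive_key("correct horse battery staple", salt, bits=256)  # "Medium"/"High"
print(len(key_128), len(key_256))  # 16 32
```

The same passphrase and salt always yield the same key. That is why only the passphrase has to be kept secret, for example in a password manager, and why losing it makes the backups unrecoverable.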

Most providers create encryption keys to secure their users’ data. However, if you use your own encryption key, you keep full control over who can decrypt your backups. GitProtect.io gives you this option: it strengthens your data security by letting you set a custom encryption key.

Zero-knowledge encryption

Ideally, the device performing the backup should know nothing about the encryption key and receive it only for the duration of the backup process. In that case, no one but you can decrypt your data. In the security industry, this approach is called zero-knowledge encryption. So, when you are looking for a reliable backup solution, make sure it offers AES data encryption, your own encryption key, and zero-knowledge encryption.

Data Center region of choice

It is essential for every security-oriented business to know where and how its data is stored and managed. Your backup provider’s Data Center location matters, as it might affect coverage, application availability, and uptime. That is why you should be able to choose where your software is hosted and where your data is stored.

With GitProtect.io, you make this choice right at the beginning: after signing up, you are asked whether to host your management service in an EU, US, or APAC-based Data Center.

Whichever Data Center you choose, the most important thing is that it complies with strict security guidelines and meets international certifications and standards such as ISO 27001, EN 50600, EN 1047-2, SOC 2 Type II, SOC 3, FISMA, DOD, DCID, HIPAA, PCI DSS Level 1, ISO 50001, LEED Gold, and SSAE 16.

Other issues you should pay attention to are physical security, fire protection and suppression, regular audits, and round-the-clock technical and network support.

Whatever your business area, sharing responsibility speeds up work, boosts team morale, and lets you focus on development. Here is what your backup tool for GitLab should let you do:

- add new accounts,

- set roles,

- set privileges to delegate responsibilities to your team members and administrators,

- have more control over access and data protection.

This is achievable only with a central management console and easy monitoring. They give you access to insightful, advanced audit logs, so you know exactly which actions were performed in the system and who made each change.

Ransomware protection

Some people may wonder how backup relates to ransomware. In fact, backup should always be ransomware-proof, because it is the final line of defense against such malware. Let’s see how and why. For example, GitProtect.io compresses and encrypts your data, so it is kept on the storage in a non-executable form. Even if the backed-up data contains ransomware, it cannot be executed and spread on the storage.

The Secure Password Manager stores the authorization data for GitLab and the storage, and for on-premise instances, the agent receives these credentials only for the duration of the backup task. So if ransomware hits the machine the agent runs on, it won’t find credentials for your GitLab data or storage.

Unfortunately, anything can happen. If ransomware encrypts your GitLab data, you can restore a chosen copy from an exact point in time and continue coding without delay.

One more thing: to prevent data from being modified or erased and to strengthen ransomware protection, backup and restore vendors offer immutable, WORM-compliant (Write Once, Read Many) storage technology, which writes each file only once but allows it to be read many times.

Disaster Recovery

GitLab Restore and Disaster Recovery – use cases & scenarios

You may think that a backup script for GitLab is a good idea. In the long run, however, it turns out to be time-consuming and distracts your DevOps team from their core duties. Moreover, your team will also need to write a recovery script for when a failure occurs. Will that take a lot of time? Sure. That’s why a professional backup solution is more reasonable and much more reliable than a GitLab backup script.

Once you choose backup and recovery software for your GitLab repos and metadata, make sure it has sustainable Restore and Disaster Recovery technology that can respond to every possible data loss scenario. Vendors usually ensure data recoverability only when GitLab is down, but unfortunately, far more dangerous situations can occur.

Here we look at how GitProtect.io prepares you for every possible scenario and which backup restore procedures you will need. But first, let’s look at the available data restore options.

Recovery features in a nutshell:

- point-in-time restore,

- granular recovery of repositories and only selected metadata,

- restore to the same or a new repository or organization account,

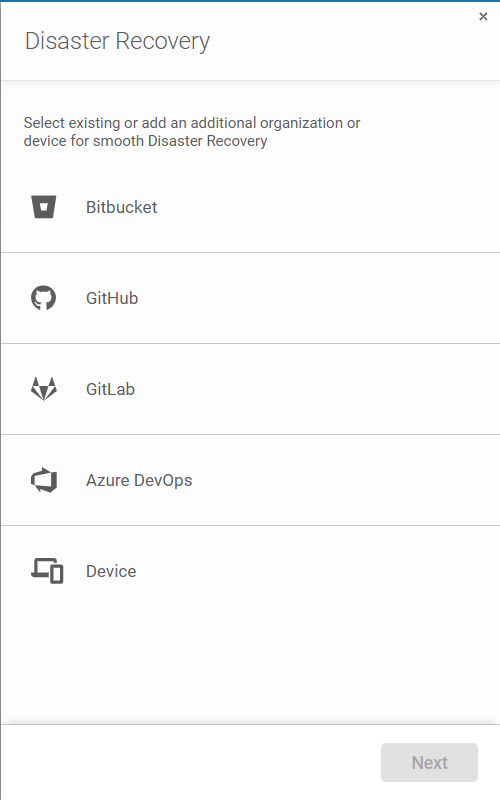

- cross-over recovery to another Git hosting platform, for example, from GitLab to GitHub, Azure DevOps or Bitbucket, and vice versa,

- easy data migration between platforms,

- restore to your local device.

Most backup vendors require additional applications to restore your data. With GitProtect.io, you don’t need them: it provides one central management console for complete backup and recovery across your DevOps ecosystem, from which you can restore exactly the backup version you need.

What about GitLab High Availability (HA)? For the most part, it minimizes service interruptions when technical problems occur. Is it a backup, then? A good backup should provide automation, encryption, versioning, data retention, a recovery process, and scalability, and HA doesn’t meet that definition. There are other issues as well: GitLab recommends HA only above a certain number of users (3,000+).

1. What if GitLab is down?

GitLab outages happen rarely, but they do happen. In such a situation, it is essential to know how to act so your team can keep working without interruption. So, what should you do during an outage? With GitProtect.io, you can instantly restore your repositories as .git to your local machine, or use the cross-over feature and restore your repository to another Git hosting platform, such as GitHub or Bitbucket. That’s it: you can continue your work.

2. What if your infrastructure is down?

As long as you follow the 3-2-1 backup rule, you shouldn’t worry about your infrastructure going down. This rule has become a widely adopted standard in data protection. According to it, you should have at least 3 copies saved on 2 different storage devices, at least 1 of which is in the cloud.

GitProtect.io provides a multi-storage system that lets customers add an unlimited number of storage instances, including on-premise, cloud, hybrid, or multi-cloud, and replicate backups among them. It also offers free cloud storage if you need a reliable second backup target. That way, even if your backup storage is down, you can restore all the needed data from any point in time from your second storage.

3. What if GitProtect’s infrastructure is down?

GitProtect.io is a product of Xopero Software, a backup and restore company, so data protection is its core business, and it is prepared for every potential outage scenario, including one that affects its own infrastructure. If GitProtect.io’s environment is down, you will receive the installer of your on-premise application. Then your only task is to log in, assign the storage where your copies are kept, and use all the data restore and Disaster Recovery options the solution provides.

Visual learner? Don’t miss our video on DR scenarios:

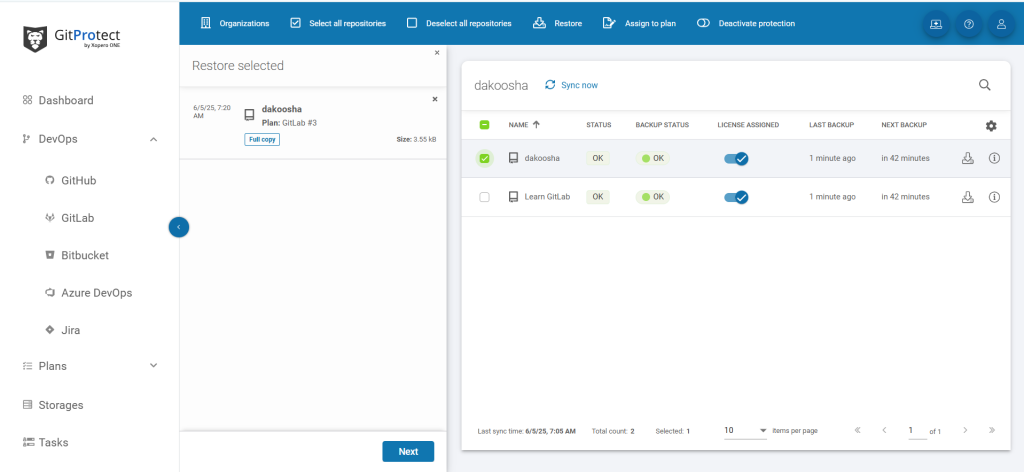

Restore multiple git repositories at a time

There are many situations in which you need to restore your entire Git environment instantly, and this is where Restore and Disaster Recovery technologies help most. With a backup plan in place, you can quickly restore your data after a failure, outage, or downtime. The easiest way is to restore multiple GitLab repositories at a time: choose the repositories you want to restore, select the most recent copies or pick specific copies manually, and restore them to your local machine. Another option is cross-over recovery to another hosting service provider. All of this makes your Disaster Recovery plan easy, fast, and efficient.

Point-in-time restore – don’t limit yourself to the last copy

Human error is one of the most common causes of cybersecurity incidents and data loss. You never know where the risk is hidden, and Git repository backup is no exception: threats range from intentional or accidental deletion of a repository or branch to an overwritten HEAD. Once you identify the exact state and date you want to roll back to, you need to be able to restore exactly that version of the backup from that specific moment in time. Note that most backup vendors let their customers restore only the latest copy or copies from up to 30 days prior, so retention limits can become a real problem.

But what if you notice devastating changes in your source code, for example, 50 days after they occurred? In that case, you need to go back further in time. That is why your backup solution should offer point-in-time restore and unlimited retention. Then it doesn’t matter when the mistake or threat is discovered. Backup and Disaster Recovery software for GitLab also serves archiving purposes, helps ensure legal compliance, and helps you overcome GitLab storage limitations.
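Choosing the restore point itself is a simple search over the available snapshots. Here is a minimal Python sketch, assuming the snapshot timestamps are kept in ascending order and that you want the newest state taken at or before a chosen moment (the dates are made up).

```python
from bisect import bisect_right
from datetime import datetime


def restore_point(snapshots, target):
    """Return the newest snapshot taken at or before `target`, or None.

    `snapshots` must be sorted in ascending order."""
    index = bisect_right(snapshots, target)
    return snapshots[index - 1] if index else None


snapshots = [datetime(2024, 1, day) for day in (1, 8, 15, 22, 29)]
# A bad force-push landed on 2024-01-20; roll back to the last state before it.
print(restore_point(snapshots, datetime(2024, 1, 19, 23, 59)))  # 2024-01-15 00:00:00
```

If retention had already pruned every snapshot older than the incident, the function would return `None`. Long or unlimited retention is meant to prevent exactly that situation.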

Restore directly to your local machine

Sometimes those who work on GitLab in SaaS want to restore their copies to their local machine. It can happen due to a weak internet connection, cloud infrastructure downtime, or service outage. Thus, together with other restore possibilities, your backup tool should permit you to restore your entire git environment to the local machine.

At the same time, it’s worth remembering that it is always great when your software provides you with some additional options, such as restoring to the same or a new GitLab repository, and cross-over recovery to another git hosting service. Why? Because it is hard to predict which recovery opportunities you may need in the future.

Don’t overwrite repositories during the restore process

If you need to restore a repository from a copy, it is better to restore it as a new repo. Why not overwrite the original one? Because you may need the original later, for example, to track changes. It also improves your security and gives you full control over your data: you decide when to keep or delete it.

Intuitive Management

UX and clean design are a key to successful backup management

As you know, when something is intuitive, the whole process goes faster. That matters even more in your development process, where all DevOps, management, and production procedures should run without delay. The backup of your GitLab environment should be automated. We have already listed numerous advanced backup features for your GitLab environment, and the previous chapters gave you a complete guide to protecting your GitLab repositories and metadata. Now, let’s see how GitProtect.io approaches user experience for security.

So how do you achieve that automation? Is it only a matter of available functionality? Definitely not. One of the main challenges is making all those features fast and efficient to use. While designing the interface, our product team aimed to rule out accidental mistakes and human error as much as possible. With GitProtect.io, customers can understand and use the application immediately, without having to think about how to add their GitLab environment or set up a GitLab backup plan. UX for security? Yes, successfully implemented.

Moreover, GitProtect.io is available as SaaS (and on-premises) and is accessible from a web browser, so you don’t need to install an application on your computer to make GitLab backups. And if a failure occurs, you can recover your data just as easily.

For your convenience, the interface is divided into a few key areas with detailed summaries, simplified views, and data-driven dashboards. You can easily switch between views to manage your account and:

- see what is happening with your GitLab repositories and metadata,

- set a replication plan,

- assign storage,

- check how the tasks are performed,

- and track the logs.

Use-Case: A step-by-step GitLab backup tutorial

How do I back up my GitLab?

First, you need to set up your GitProtect.io account, which is probably the easiest thing you have ever done. To register, provide your email address or use your GitLab, GitHub, Atlassian, Google, or Microsoft SSO.

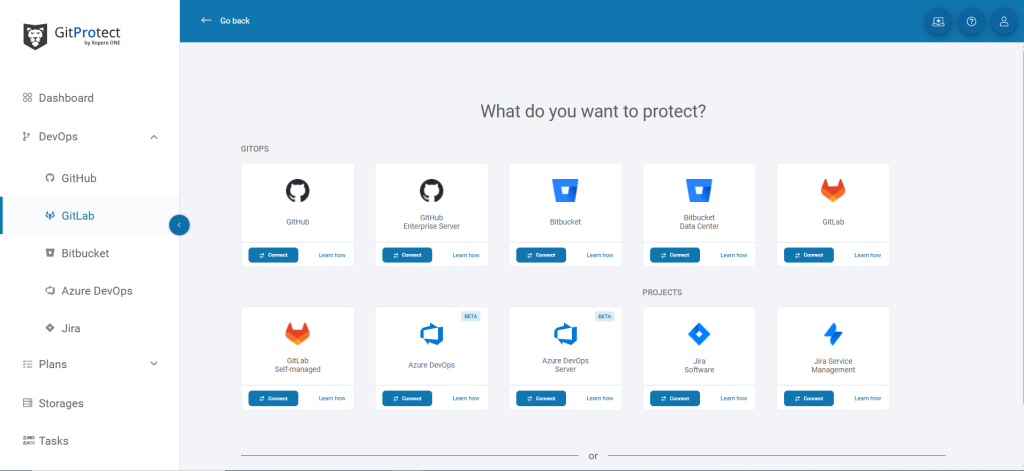

After registering, connect your GitLab organization by logging in to your GitLab account and granting the permissions needed to start performing automated backups, either predefined or customized.

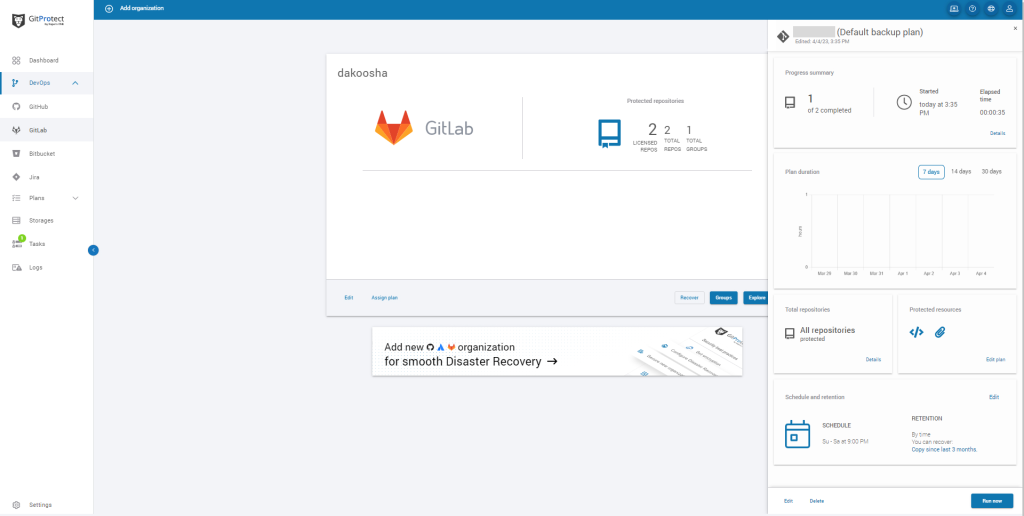

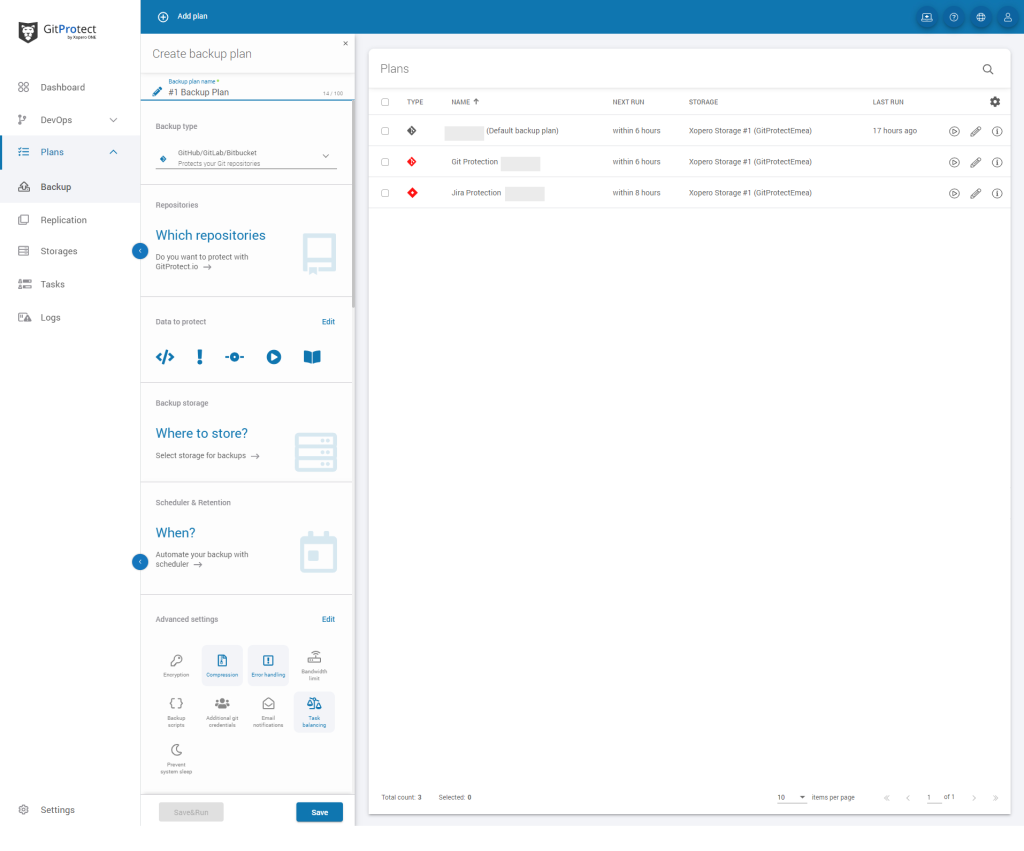

Now you are connected and ready to create your first backup plan. You have a few options:

- run a predefined backup plan if you don’t want to create your own. In this case, you perform daily backups of all repositories and metadata to the included free cloud storage (you choose a Data Center location, EU/US/AUS, during the sign-up process),

- or set a custom backup plan for your GitLab instance, where you define the plan name, backup type, data to protect (all or selected repositories and metadata), backup storage, scheduler and retention, and advanced settings (like encryption, error handling, bandwidth limit, task balancing, and more).
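If you keep your backup policies documented or versioned alongside your code, the custom plan fields listed above map naturally onto a small data structure. The sketch below is a hypothetical model for illustration only. The field names, defaults, and the `gitprotect-cloud` storage label are assumptions, not GitProtect.io’s configuration format or API.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional

class Rotation(Enum):
    BASIC = "basic"
    GFS = "gfs"  # Grandfather-Father-Son

@dataclass
class BackupPlan:
    name: str
    backup_type: str                                       # e.g. "full"; allowed values are assumed
    repositories: List[str] = field(default_factory=list)  # empty list means "all repositories"
    include_metadata: bool = True                          # issues, merge requests, etc.
    storage: str = "gitprotect-cloud"                      # hypothetical storage label
    schedule_cron: str = "0 2 * * *"                       # cron syntax: every day at 02:00
    rotation: Rotation = Rotation.BASIC
    retention_days: Optional[int] = None                   # None means keep indefinitely
    encryption: bool = True
    bandwidth_limit_mbps: Optional[int] = None             # None means no limit

    def validate(self) -> None:
        """Reject obviously inconsistent settings before the plan is saved."""
        if not self.name.strip():
            raise ValueError("plan name is required")
        if self.retention_days is not None and self.retention_days <= 0:
            raise ValueError("retention_days must be positive, or None to keep indefinitely")
        if self.bandwidth_limit_mbps is not None and self.bandwidth_limit_mbps <= 0:
            raise ValueError("bandwidth_limit_mbps must be positive, or None for no limit")

plan = BackupPlan(name="nightly-all-repos", backup_type="full", rotation=Rotation.GFS)
plan.validate()
```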

Where are GitLab backup files stored?

Once you set up your account with GitProtect.io, by default, you get unlimited GitProtect Cloud Storage, which permits you to store your data within 2 regions: either in EU, US, or APAC region. If you prefer your own storage, you can easily assign it, whether local or cloud. GitProtect supports AWS Storage, Azure Blob Storage, Backblaze B2, GCS, and all S3-compatible clouds, as well as NFS, CIFS, SMB, and local disk resources.

GitProtect is a multi-storage system that lets you add as many storage instances as your company requires. It also supports storage replication, keeping efficient, consistent copies in multiple locations so you can follow the 3-2-1 backup rule and ensure redundancy and business continuity. You can replicate from any data store to any other (cloud to cloud, cloud to local, or local to local) with no limitations.
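You can sanity-check a storage layout against the 3-2-1 rule by counting the copies, the distinct storage media, and the offsite copies. This Python sketch uses one common reading of the rule:

- at least three copies of the data, with the production data counted as one,
- on at least two different media,
- with at least one copy offsite.

The `BackupCopy` type and the example locations are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable

@dataclass(frozen=True)
class BackupCopy:
    location: str  # where the copy lives, e.g. "gitlab-saas" or "office-nas"
    media: str     # kind of storage, e.g. "cloud-object", "nfs", "local-disk"
    offsite: bool  # True if the copy is outside your own premises

def satisfies_3_2_1(copies: Iterable[BackupCopy]) -> bool:
    """At least 3 copies, on at least 2 media types, with at least 1 offsite."""
    items = list(copies)
    return (
        len(items) >= 3
        and len({c.media for c in items}) >= 2
        and any(c.offsite for c in items)
    )

layout = [
    BackupCopy("gitlab-saas", "saas", offsite=True),                  # production data
    BackupCopy("gitprotect-cloud-eu", "cloud-object", offsite=True),  # primary backup
    BackupCopy("office-nas", "nfs", offsite=False),                   # local replica
]
print(satisfies_3_2_1(layout))      # → True
print(satisfies_3_2_1(layout[:2]))  # → False (only two copies)
```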

How do I restore a repository in GitLab?

Once you set up a backup plan for your GitLab ecosystem with GitProtect, restoring takes only a few clicks:

- choose the backup plan you want to restore,

- pick “GitLab” (if you want to restore your GitLab data to the same account),

- that’s it, your data is restored to the same account.

Custom backup plan and additional settings

After assigning the storage (or choosing the included cloud), it’s time to set the schedule and data retention settings. You can choose Basic, the simplest type of rotation scheme, or Grandfather-Father-Son (GFS), which stands for:

- Grandfather – a full copy performed once a month,

- Father – differential GitLab backups run every week,

- and Son – incremental copies performed every day.
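You can sketch the GFS scheme in a few lines of Python. The calendar is an assumption for illustration: a full backup on the 1st of each month, a differential on Sundays, and incrementals on all other days. A real product may use different days.

The second function shows why the scheme works. A differential contains all changes since the last full backup. An incremental contains changes since the previous backup of any type. So a restore needs the last full, the latest differential after it, and every incremental since then.

```python
from datetime import date, timedelta
from typing import List

def gfs_backup_type(day: date) -> str:
    """Classify a day's scheduled run under an assumed GFS calendar."""
    if day.day == 1:
        return "full"          # Grandfather: monthly full copy
    if day.weekday() == 6:     # weekday(): Monday == 0 ... Sunday == 6
        return "differential"  # Father: weekly, changes since the last full
    return "incremental"       # Son: daily, changes since the previous backup

def restore_chain(target: date, first_full: date) -> List[date]:
    """Dates of the backups needed to restore the state as of `target`."""
    if gfs_backup_type(first_full) != "full":
        raise ValueError("first_full must be a full-backup day")
    chain: List[date] = []
    day = first_full
    while day <= target:
        kind = gfs_backup_type(day)
        if kind == "full":
            chain = [day]            # a new full replaces everything before it
        elif kind == "differential":
            chain = [chain[0], day]  # full + this differential cover all changes so far
        else:
            chain.append(day)        # each incremental builds on the previous backup
        day += timedelta(days=1)
    return chain

print(gfs_backup_type(date(2024, 6, 2)))  # → differential (a Sunday)
print(restore_chain(date(2024, 6, 12), date(2024, 6, 1)))
```

For example, restoring the state of June 12, 2024, with the full backup on June 1 needs five backups:

- the June 1 full,
- the June 9 differential,
- the incrementals from June 10 to 12.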

Then you can choose additional options to customize your backup plan and make your GitLab backups more reliable:

- encryption and data security – to protect your data in flight and at rest with a unique encryption key,

- compression – to compress and reduce the size of the copy,

- error handling – to specify how to handle possible errors,

- bandwidth limit – to reduce network usage and limit network speed,

- triggering backup tasks – to run a backup manually at any time instead of waiting for the scheduler,

- and more…
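To show what the compression option does in principle, this minimal sketch packs an exported backup directory into a gzip-compressed tar archive. It uses Python’s standard `tarfile` module. The directory layout and file names are hypothetical, and GitProtect.io does not necessarily compress data this way internally. Encryption is left out because it would require a third-party library.

```python
import tarfile
import tempfile
from pathlib import Path

def compress_backup(src_dir: Path, archive: Path) -> Path:
    """Pack `src_dir` (recursively) into a gzip-compressed tar archive."""
    with tarfile.open(str(archive), "w:gz") as tar:
        # arcname keeps member paths relative, e.g. "export/issues.json".
        tar.add(str(src_dir), arcname=src_dir.name)
    return archive

# Demo with a throwaway directory of repetitive, highly compressible data.
with tempfile.TemporaryDirectory() as tmp:
    src = Path(tmp) / "export"
    src.mkdir()
    payload = '{"title": "bug"}\n' * 10_000
    (src / "issues.json").write_text(payload)
    out = compress_backup(src, Path(tmp) / "export.tar.gz")
    print(len(payload), "bytes ->", out.stat().st_size, "bytes")
```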

How do I move GitLab data to another hosting service?

We have already mentioned that GitProtect.io has a feature called cross-over recovery. It lets you back up your entire GitLab instance and recover it to a completely different service provider, such as GitHub, Bitbucket, or Azure DevOps. It works simply: choose the GitLab repositories and metadata you want to copy, then select a new or existing Bitbucket, GitHub, or Azure DevOps organization as the recovery destination. That’s all, your GitLab migration to Bitbucket, GitHub, or Azure DevOps is complete.
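For the Git data alone, you can move a repository to another host manually with a mirror clone followed by a mirror push. The sketch below builds and runs those standard `git` commands from Python. The URLs and the `mirror.git` working directory are placeholders.

This approach copies branches, tags, and other refs only. Issues, merge requests, and other metadata are not part of a Git repository and are not transferred this way. Also, `push --mirror` deletes refs on the target that do not exist in the source, so point it at an empty repository.

```python
import subprocess
from typing import List

def mirror_commands(source_url: str, target_url: str, workdir: str = "mirror.git") -> List[List[str]]:
    """Return the git commands that copy every ref from source to target."""
    return [
        # A bare mirror clone fetches all branches, tags, and other refs.
        ["git", "clone", "--mirror", source_url, workdir],
        # Push every ref in the mirror to the (empty) target repository.
        ["git", "-C", workdir, "push", "--mirror", target_url],
    ]

def migrate(source_url: str, target_url: str, workdir: str = "mirror.git") -> None:
    """Run the mirror commands, stopping at the first one that fails."""
    for cmd in mirror_commands(source_url, target_url, workdir):
        subprocess.run(cmd, check=True)  # raises CalledProcessError on failure
```

Calling `migrate(source, target)` with real repository URLs requires Git credentials for both hosts. Test it against a throwaway target first.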

Conclusion

Nowadays, your GitLab environment faces many threats: human mistakes, malware, or outages. Yes! Outages can happen even to GitLab. For example, one of the biggest GitLab outages made user accounts unavailable for 6 hours (and some of them were deleted). That is why having a reliable backup plan in place is vital for uninterrupted coding and business continuity.

However, creating a custom backup plan is only one part of a successful backup strategy. When you make your GitLab backups, you should know exactly how you will respond in case of a disaster, including every action needed to make your copy instantly recoverable and continue coding without interruption.

Before you go:

🌟 See in practice how GitProtect helps Turntide Technologies, named “The Next Big Thing In Tech” and “A World Changing Idea”, back up and recover the company’s GitLab and Jira data

📅 Schedule a live custom demo and learn more about GitProtect backups for your GitLab data protection

📌 Try GitProtect backups for your GitLab ecosystem to secure your DevOps data and ensure your business continuity

✍️ Subscribe to GitProtect DevSecOps X-Ray Newsletter and always stay up-to-date with the latest DevSecOps insights